k-d Forests

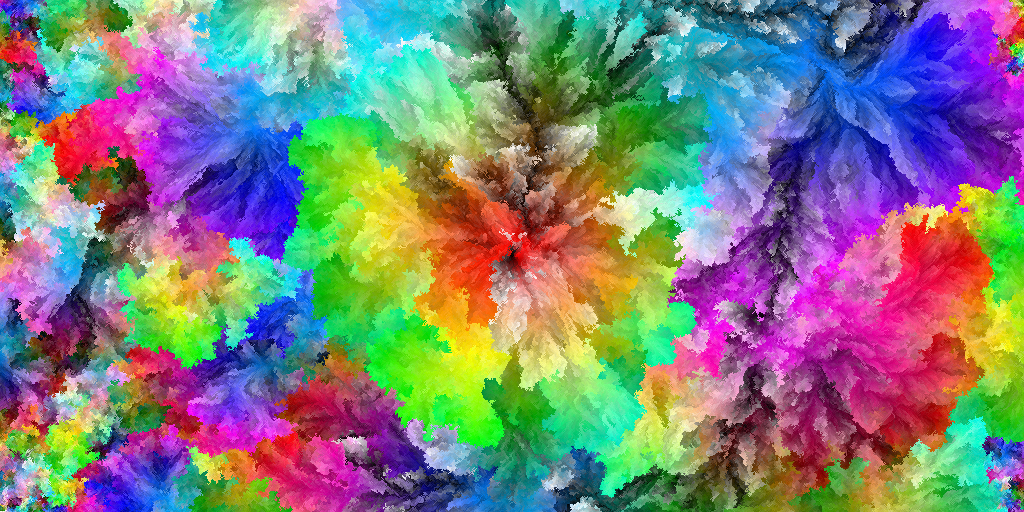

Recently I saw an interesting Code Golf problem: create an image using all possible RGB colours. The top ranked submission, by József Fejes, contained some truly beautiful images, but they took an immense amount of time to generate.

As described in the answer and a follow-up blog post, the images were generated by putting a random colour in the centre, then placing the next colour adjacent to the most similar colour, repeatedly. The submitted code used exhaustive search over the boundary pixels to find the best location; using some geometric data structures, we can do a lot better.

The problem of finding where to place the current pixel amounts to finding the nearest neighbour to the colour being placed, using Euclidean distance over the RGB colour space. The most common data structure for nearest neighbour search is the k-d tree, but they don’t perform well in the face of rapid insertion and removal. Since the boundary changes every time we place a pixel, we’ll need a more dynamic data structure.

Usually R-trees are used for problems like these, but I came up with another idea. Instead of a single k-d tree, we can use a collection of k-d trees (hence the name “k-d forest”) with increasing power-of-2 sizes. It looks like this:

Rather than re-build a large tree on every insertion, we usually only have to rebuild the first few small trees in the forest. Since k-d trees can be built in time, we get this performance for n insertions:

Allowing node removal destroys this nice amortized performance though, because you could repeatedly remove and add a node right when the largest tree has to get rebuilt.

So we cheat: instead of really deleting nodes, simply set a “removed” flag to true.

To avoid wasting time searching through removed nodes, we can rebuild the whole forest without them once they reach, say, 50% of the total nodes.

Query performance is also nice. Since a single k-d tree takes on average to answer a nearest-neighbour query, and we have up to trees, our average running time per query is

So what’s the real-world performance like? Well, it takes only about four minutes to generate a 4096×4096 image using all possible 24-bit colours. A straight-across comparison isn’t exactly fair due to me using C instead of C♯, but the original code took dozens of hours to generate the same images.

Rather than making the images available, I’ve hosted the code here.

It takes about as long to generate the images (even for the full 24 bit spectrum) as it would to download them anyway.

Simply checkout the code and run make image to generate an image.

You can customize some #defines in main.c to generate different kinds and sizes of images.

EDIT: Since József Fejes’s 24-bit images aren’t up anymore, I’ve shared a Google Drive folder with mine here.

Thanks to József Fejes for the concept.

Comments

There is some more info on this sort of thing at http://en.wikipedia.org/wiki/Dynamization, http://wwwisg.cs.uni-magdeburg.de/ag/lehre/SS2009/GDS/slides/S12.pdf, and http://www.mpi-inf.mpg.de/~mehlhorn/ftp/mehlhorn27.pdf.

Thanks for the links! I’ve seen the technique applied before but it’s interesting to see the black-box analysis.

Hey, I encountered some errors with building this, and I wanted to share how I resolved them.

Basically, running

makeyielded linker-errors saying it couldn’t find references to a variety of functions in libpng. To fix this, I had to modify the make command to be:

cc -std=c99 -pipe -O2 -Werror -Wall -Wpedantic -Wextra -Wno-sign-compare -Wno-unused-parameter -Wunreachable-code -Wshadow -Wpointer-arith -Wwrite-strings -Wcast-align -Wstrict-prototypes -lm -lpng -o kd-forest kd-forest.c util.c color.c main.c -lpngThe change is the addition of

-lpngon the end. That resolved my compilation problem.Yeah it’s good practice to put the -l*s after the source files but I forgot. Fixed now, thanks!

Gregor_TL

How did you generate *hue and *hue2 images? What makes them so different?

You can see in József’s hue-sorted images that there are some artefacts near the edges. This happens because there are naturally “holes” in the image as it’s generated, and by the time the purple pixels are being placed, those holes are the only empty places for them.

In the *hue.png images, I’ve tried to mitigate it by doing multiple passes through the sorted colours. You can see that in the code here. That’s why you see multiple “rings” of the entire spectrum.

In the *hue2.png images, I’ve used a better method. Instead of multiple evenly-spaced stripes, I do every other colour, then every 4th, then every 8th, 16th, etc. You can see that in the code here.

sammko

Hi, I rendered a 22-bit animation (2048x2048) and cropped it down to 1080p. You can see the result on YouTube. Thanks for the algorithm :D

MrNosco

You shouldn’t use uint8_t pixel[3] notation in function arguments.

It might look like sizeof(pixel) should be sizeof(uint8_t[3]), but it’s actually sizeof(uint_t*).Yeah I read this thread too. But because this is my code, I get to have my own opinions on what I should and shouldn’t do. The

[3]is useful documentation. You’d have a point if I actually usedsizeof(pixel)anywhere, but I don’t.

Henry

Hey I’m trying to do something similar a, but I have a question for you.

I understand how the minimum selection would be implemented with the KD-tree by querying for a nearest neighbor to the target color and then placing it in a randomly chosen adjacent location, but how did you do the average selection?

If you were to compute the average color for a location using it’s 8 neighbors then you could nearest neighbor between the target and the average and place at the resulting spot. But I think that gives a slightly different result to the original guy who minimized the average of the distances to the neighbors , rather than minimizing the distance to the average of the neighbors.

Am I correct in thinking your averaging scheme is slightly different to the original guy’s, or if not how did you do nearest neighbor search for the average selection case?

You’re right! I didn’t realize that’s what the original code did. Actually it looks like the original code computes the average of the *squared* distances.